Last month, I spent three hours on a campaign brief.

Not because the campaign was complex. Because I kept switching tabs.

Research in one window. Competitor analysis in another. Keyword data somewhere else. And every time I wrote a section, I had to check if it still aligned with decisions I'd made earlier.

But that wasn't even the real problem.

The real problem? I had no one to fight with.

Not fight in a bad way. Fight in the way you need when you're building something.

Someone who pushes back on your positioning angle. Someone who says "wait, that doesn't align with how you defined your ICP last week." Someone who tells you when your campaign strategy is solid and when it's missing a step.

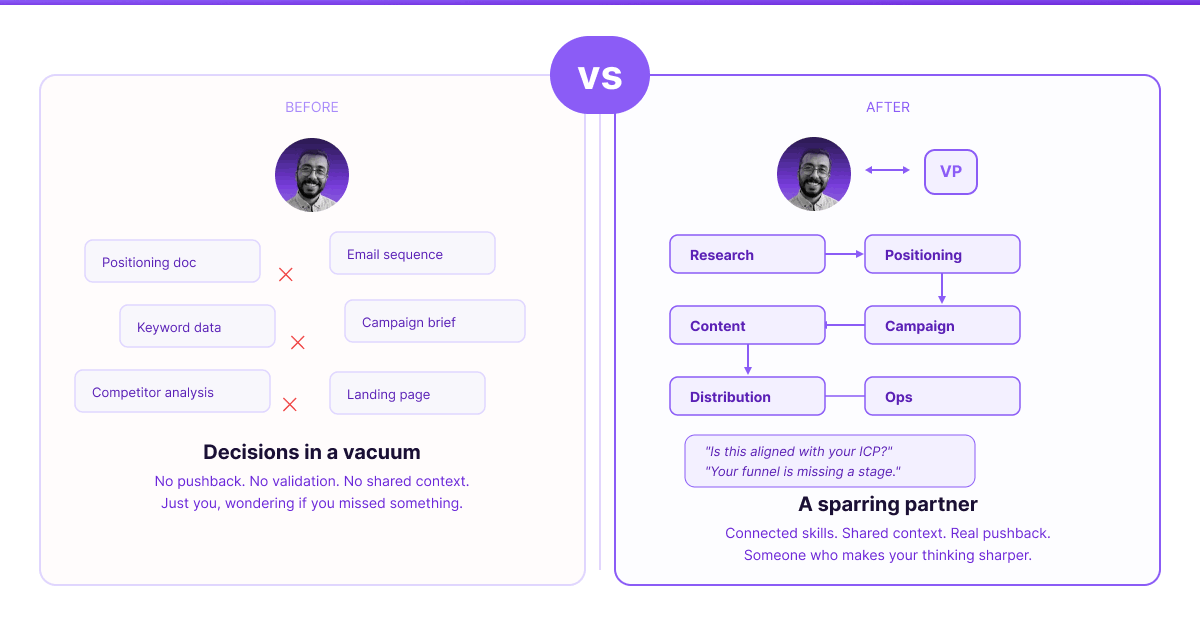

When you're a solo marketer or on a small team, you don't get that.

You're making decisions in a vacuum. You brainstorm alone. You build strategy alone. You ship and wonder if you missed something obvious — because there was no one in the room to say "have you thought about this?" before you committed.

I needed a sparring partner.

Someone who could brainstorm with me at 11pm when ideas are half-formed. Someone who'd tell me "actually, this positioning is strong, ship it" when I was second-guessing myself for the fifth time. Someone who'd ask "is this aligned with how B2B SaaS companies typically structure their ABM plays?" before I built something from scratch.

Not a tool that just says yes. A partner that makes me think harder.

Here's what changed.

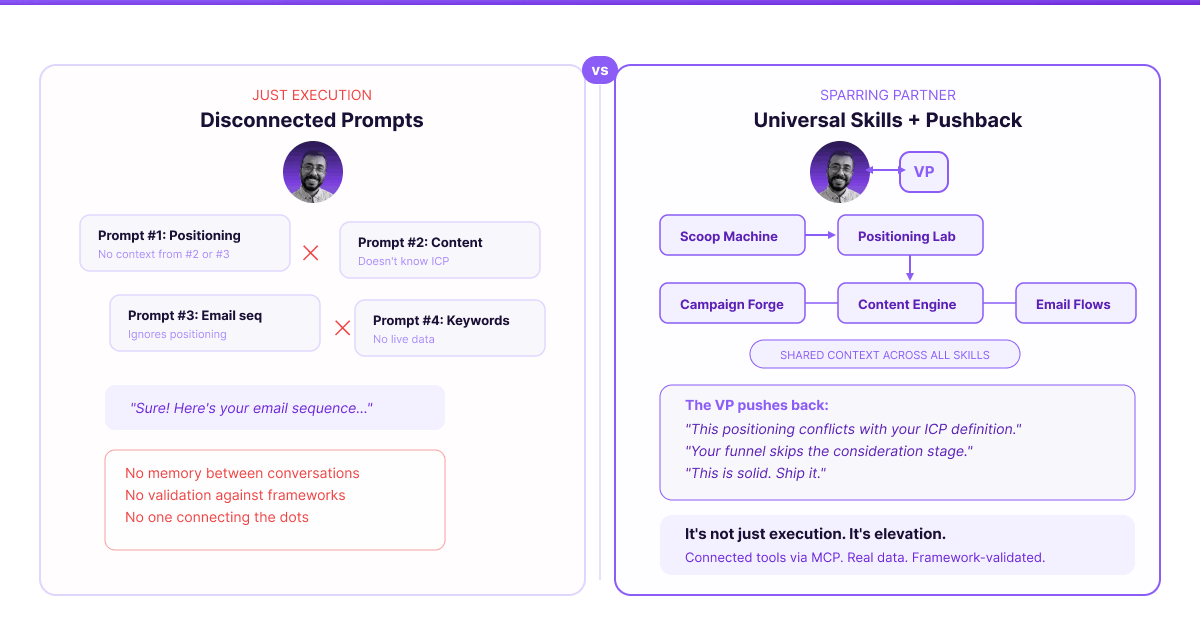

I stopped thinking about AI as a tool that answers questions. I started thinking about it as a team that thinks with me.

Not a collection of disconnected prompts I copy-paste between conversations. Those are useless. You use one prompt for positioning, another for content, another for email sequences, and none of them knows what the others said. There's no memory. No continuity. No one connecting the dots.

What if, instead, I had universal skills?

Specialists who share context. Who know what the positioning doc says when they're writing email sequences. Who can pull real data from Ahrefs and check my calendar before suggesting a launch date. Who validate my thinking against actual marketing frameworks instead of just executing whatever I ask.

What if I had an AI team that actually talks to each other — and talks back to me?

That's the system I built.

18 specialized skills. One orchestrator. Real tool connections via MCP.

And most importantly: a sparring partner who fights with me, not just for me.

The Architecture: 5 Layers, 18 Specialists

Layer 1: Research & Strategy

Everything starts here. You can't position without understanding the market. You can't target keywords without knowing the competitive landscape.

Scoop Machine → Market research, ICP analysis, competitor audits

Output: Research report (.docx), content topics (.xlsx)

Positioning Lab → Category strategy, messaging architecture, value pillars

Output: Positioning brief, messaging framework

Keyword Intel → SEO research via Ahrefs MCP, content gaps, SERP analysis

Output: Keyword strategy, prioritized targets (.xlsx)

Layer 2: Campaign & GTM

Turns research into strategy. Campaign plans, launch timelines, ABM programs.

Campaign Forge → Campaign briefs, content calendars, channel strategy

Output: Campaign brief (.docx), calendar (.xlsx), Asana CSV

Launch Planner → Product GTM, launch timelines, announcement sequences

Output: Launch brief, timeline, runbook

ABM Architect → Account-based programs, personalized plays, tiered engagement

Output: ABM program doc, account tracker (.xlsx)

Layer 3: Content Production

Produces the assets. But only after strategy is locked.

Content Engine → Blog posts, whitepapers, case studies, landing pages

Output: Finished content (.md or .docx)

Social Studio → Company social content, post batches, carousels

Output: Post drafts, social calendar (.xlsx)

Sales Enabler → Battlecards, pitch decks, talk tracks, objection handling

Output: Sales materials (.docx, .pptx)

Layer 4: Distribution & Activation

Gets the content in front of people.

Email Flows → Nurture sequences, onboarding flows, outbound cadences

Output: Email sequences with copy

Outreach Engine → SDR playbooks, cold outreach strategy, multi-channel sequences

Output: Outbound campaign plans

Paid Briefs → Media plans, ad creative briefs, budget allocation

Output: Paid media brief, budget tracker

Event Planner → Webinar playbooks, event promotion, run-of-show docs

Output: Event brief, promotion plan

Partner Plays → Co-marketing campaigns, partner enablement, joint GTM

Output: Partner playbook

Layer 5: Operations & Measurement

Makes sure the machine runs.

Funnel Ops → Lead scoring models, MQL/SQL definitions, lead routing

Output: Scoring model, lifecycle docs

Data Hygiene → CRM cleanup, enrichment workflows, deduplication

Output: Cleaning playbook

Workflow Builder → Marketing automation specs for HubSpot, Marketo, Pardot

Output: Workflow diagrams, implementation guides

Metrics Dash → KPI frameworks, attribution models, reporting templates

Output: Dashboard design, reporting framework
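If it helps to see the five layers as data, here is a minimal sketch in Python. It only restates the list above. The names `LAYERS`, `layer_of`, and `all_skills` are made up for illustration, and this is not how the skills are actually stored.

```python
# Layer -> skills, copied from the architecture described above.
# The structure and helper names are illustrative assumptions.
LAYERS: dict[str, list[str]] = {
    "Research & Strategy": ["Scoop Machine", "Positioning Lab", "Keyword Intel"],
    "Campaign & GTM": ["Campaign Forge", "Launch Planner", "ABM Architect"],
    "Content Production": ["Content Engine", "Social Studio", "Sales Enabler"],
    "Distribution & Activation": [
        "Email Flows", "Outreach Engine", "Paid Briefs",
        "Event Planner", "Partner Plays",
    ],
    "Operations & Measurement": [
        "Funnel Ops", "Data Hygiene", "Workflow Builder", "Metrics Dash",
    ],
}


def all_skills() -> list[str]:
    """Flatten the registry into one list, in layer order."""
    return [skill for skills in LAYERS.values() for skill in skills]


def layer_of(skill: str) -> str:
    """Return the layer a skill belongs to, or raise KeyError if unknown."""
    for layer, skills in LAYERS.items():
        if skill in skills:
            return layer
    raise KeyError(skill)
```

A registry like this is the kind of thing an orchestrator would need in order to know who exists before deciding who runs.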

The Orchestrator: VP of Marketing

Sits above all 18 skills. Doesn't do the work — coordinates who does what, in what order, with what inputs.

But more than that: challenges my thinking. Asks the questions I should be asking myself. Validates strategy against real frameworks before I go build something.

How It Actually Pushes Back

This isn't a one-way street where I ask and Claude executes.

It's more like having a thought partner who makes me sharper.

When I propose a positioning angle:

"How does this compare to how competitors are positioning? Are you going for category creation or point solution? Based on the ICP you defined last week, this messaging might be too technical for the economic buyer."

When I sketch a campaign plan:

"Does this timeline account for the content dependencies? You're launching paid before the landing page is live. Also, this budget allocation is heavy on LinkedIn — is that aligned with where your ICP actually spends time?"

When I'm second-guessing myself:

"This positioning is solid. The value pillars are differentiated, the messaging avoids the competitor trap you identified in the research phase. Ship it."

When I'm missing something:

"Your funnel goes straight from awareness content to demo request. Most B2B SaaS funnels have a consideration stage in between — a guide, a comparison page, something that handles objections before the sales conversation. Want me to map out what that might look like?"

It's not just execution. It's elevation.

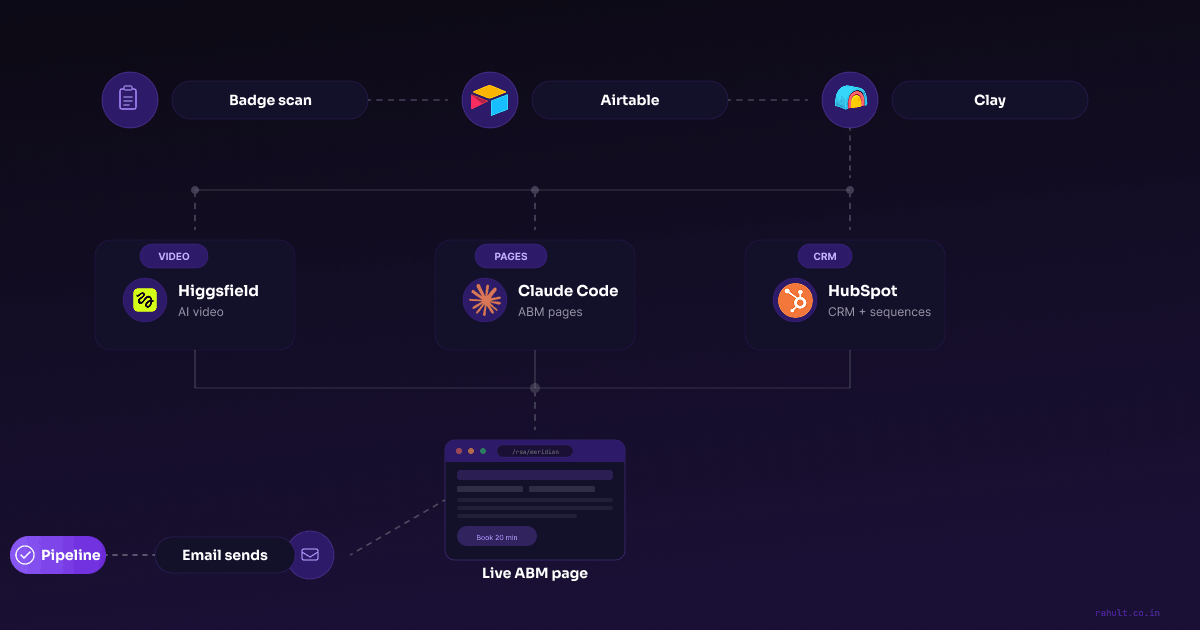

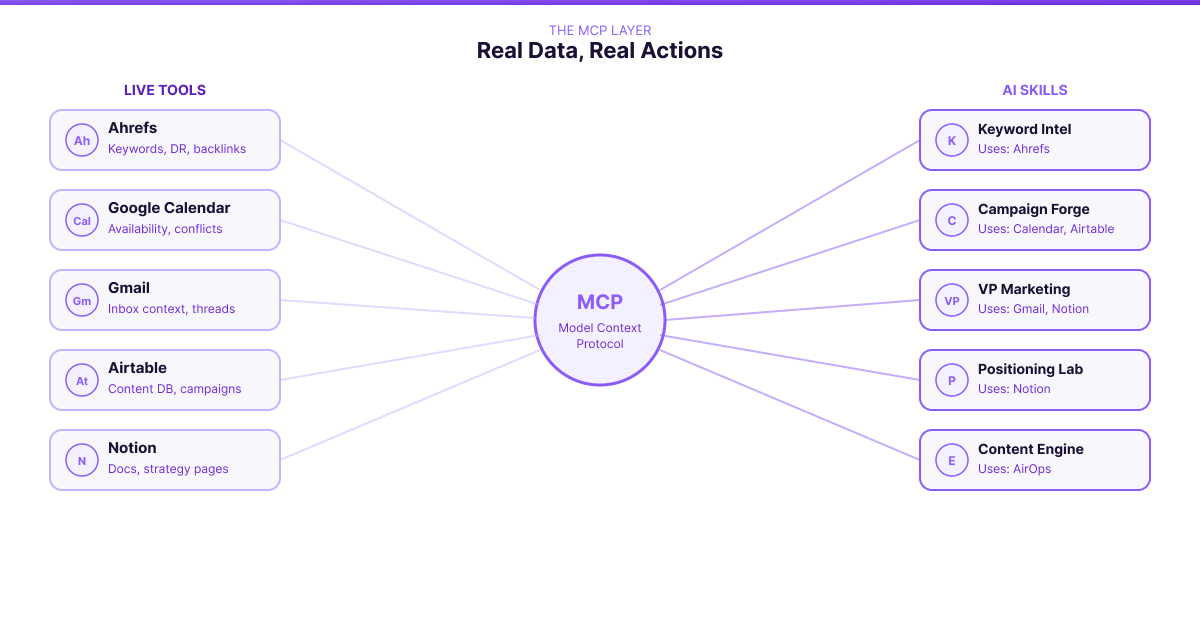

The MCP Layer: Real Data, Real Actions

Here's where it gets interesting.

Several skills aren't just Claude thinking — they're Claude connected to live tools through MCP (Model Context Protocol).

What's connected right now:

Ahrefs → Real keyword difficulty, organic traffic, competitor gaps

Used by: Keyword Intel

Google Calendar → Check availability, avoid conflicts, see what's coming

Used by: Campaign Forge, Launch Planner, Event Planner

Gmail → Search inbox for context, see active conversations

Used by: VP Marketing, Outreach Engine

Airtable → Content database, campaign records, lead tracking

Used by: Scoop Machine, Campaign Forge, Metrics Dash

Notion → Company docs, strategy pages, positioning briefs

Used by: Positioning Lab, VP Marketing

AirOps → Content workflows, brand voice, persona definitions

Used by: Content Engine, Social Studio

What this actually means:

When Keyword Intel analyzes competitors, it pulls real Domain Ratings from Ahrefs. Not estimates. Actual data.

When Campaign Forge builds a timeline, it checks my Google Calendar before suggesting dates.

When Positioning Lab references our messaging, it pulls from the live Notion doc — not a copy-paste I forgot to update.

The AI isn't guessing. It's working with real information.
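The connector list above is really a lookup table: connector on one side, the skills that use it on the other. A rough Python sketch of that table follows. The mapping is copied from the list; the names `CONNECTOR_USERS` and `connectors_for` are hypothetical, and no real MCP client calls are shown.

```python
# Connector -> skills that use it, copied from the list above.
# This is plain data for illustration; it does not talk to any MCP server.
CONNECTOR_USERS: dict[str, list[str]] = {
    "Ahrefs": ["Keyword Intel"],
    "Google Calendar": ["Campaign Forge", "Launch Planner", "Event Planner"],
    "Gmail": ["VP Marketing", "Outreach Engine"],
    "Airtable": ["Scoop Machine", "Campaign Forge", "Metrics Dash"],
    "Notion": ["Positioning Lab", "VP Marketing"],
    "AirOps": ["Content Engine", "Social Studio"],
}


def connectors_for(skill: str) -> list[str]:
    """Return the connectors a skill can reach, sorted alphabetically."""
    return sorted(
        connector
        for connector, users in CONNECTOR_USERS.items()
        if skill in users
    )
```

Inverting the table this way makes it easy to see which skills work from live data and which ones (Funnel Ops, for example) currently have no connector at all.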

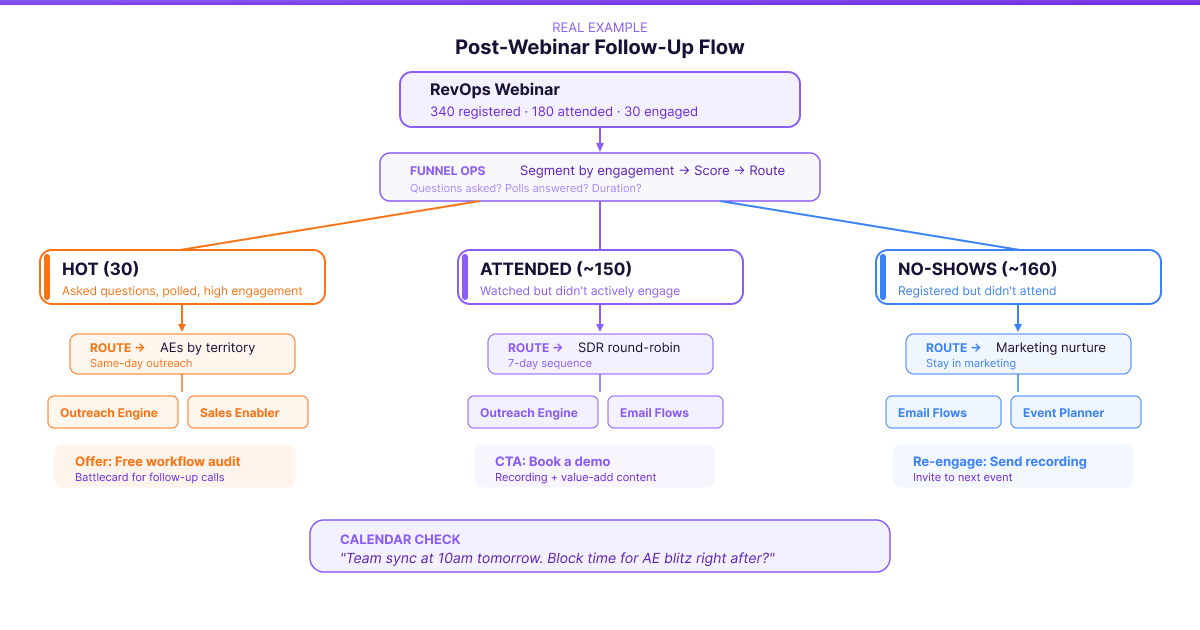

A Real Example: "We Just Ran a Webinar. Now What?"

Me: "We just wrapped our RevOps webinar. 340 registered, 180 attended. Help me build the follow-up."

VP Marketing doesn't just start writing emails. It asks:

"What was the engagement like? Did anyone ask questions or hit the polls? What's the offer — are we pushing demos, or nurturing to a different asset?"

Me: "About 30 people asked questions or answered polls. We want to offer a free workflow audit to hot leads. Everyone else gets a path to demo."

VP Marketing coordinates:

"Got it. Let me break this into segments first.

Hot leads (30): Asked questions or answered polls, high engagement. These go straight to AEs for same-day outreach. Offer: free workflow audit.

Attended but passive (~150): Watched but didn't engage. SDR sequence over 7 days, recording plus value-add content, CTA is book a demo.

No-shows (~160): Registered but didn't attend. Marketing nurture — send the recording, re-engage, invite to next event.

For routing, I'll check your CRM structure. Hot leads should route by territory to the assigned AE. Attended goes to SDR round-robin. No-shows stay in marketing.

Does this segmentation make sense, or do you want to define 'hot' differently?"

After we align on segments:

"Here's the sequence:

- Funnel Ops — segment the list by engagement signals, score leads, set routing rules

- Outreach Engine — build AE talk track for hot leads, SDR sequence for attended

- Email Flows — no-show nurture sequence, recording access, re-engagement

- Sales Enabler — give AEs a quick battlecard on the webinar topic for follow-up calls

I'm checking your calendar... you have a team sync tomorrow at 10am. Want me to block time for the AE call blitz right after, while leads are still warm?"

Funnel Ops outputs the segmented list with routing rules. Outreach Engine builds the multi-channel cadences. Email Flows creates the nurture sequences. Everything references the same context — who attended, what they engaged with, what the offer is.
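The segmentation rule from that conversation is simple enough to write down. Below is a minimal Python sketch, assuming each registrant record carries three booleans. The field names (`attended`, `asked_question`, `answered_poll`) and the functions `segment` and `bucket` are hypothetical; a real webinar export will look different.

```python
from dataclasses import dataclass


@dataclass
class Registrant:
    # Hypothetical fields; map these to whatever your webinar tool exports.
    email: str
    attended: bool
    asked_question: bool = False
    answered_poll: bool = False


# Routing notes taken from the VP's proposal above.
ROUTING = {
    "hot": "AE by territory, same-day outreach, free workflow audit",
    "attended": "SDR round-robin, 7-day sequence, book-a-demo CTA",
    "no_show": "Marketing nurture, recording plus next-event invite",
}


def segment(r: Registrant) -> str:
    """Hot = attended and engaged; attended = watched passively; else no-show."""
    if r.attended and (r.asked_question or r.answered_poll):
        return "hot"
    if r.attended:
        return "attended"
    return "no_show"


def bucket(registrants: list[Registrant]) -> dict[str, list[str]]:
    """Group registrant emails by segment."""
    buckets: dict[str, list[str]] = {"hot": [], "attended": [], "no_show": []}
    for r in registrants:
        buckets[segment(r)].append(r.email)
    return buckets
```

If you define "hot" differently, as the VP asked, `segment` is the only function that changes.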

What the VP Actually Did

Notice what happened there. The VP didn't just execute. It:

- Asked clarifying questions first — "What was engagement like? What's the offer?"

- Proposed segmentation logic — hot vs attended vs no-show, based on engagement signals

- Suggested routing rules — territory for AEs, round-robin for SDRs

- Validated the approach — "Does this make sense, or do you want to define 'hot' differently?"

- Checked real constraints — looked at my calendar before scheduling the call blitz

This is the sparring. It's not just doing what I asked. It's making sure what I asked is the right thing to do.

Inside the VP Skill

Here's a glimpse of how the orchestrator thinks. This is the mental model, not the full prompt:

When someone asks for strategic help, I:

1. UNDERSTAND THE OBJECTIVE

What are they trying to achieve? What does success look like?

2. CHALLENGE THE THINKING

Does this align with their ICP definition? Is this consistent with positioning decisions? What frameworks apply here?

3. CHECK DEPENDENCIES

What foundational work exists? What needs to happen first?

4. SEQUENCE THE WORK

Which skills run first? Where are the checkpoint moments?

5. VALIDATE AGAINST FRAMEWORKS

Is this funnel structure standard for B2B? Does this campaign plan follow best practices?

6. PUSH BACK OR CONFIRM

If solid: "This is aligned. Ship it."

If gaps: "Here's what I'd reconsider..."
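Step 6 is the part that is easiest to picture as code. Here is a small Python sketch, assuming the earlier steps each produce a pass/fail result with a note. The `Check` class and `verdict` function are invented for illustration; the real skill is a prompt, not a function.

```python
from dataclasses import dataclass


@dataclass
class Check:
    # One validation result, e.g. "ICP alignment" or "Funnel structure".
    name: str
    passed: bool
    note: str = ""


def verdict(checks: list[Check]) -> str:
    """Confirm if every check passed; otherwise list what to reconsider."""
    gaps = [c for c in checks if not c.passed]
    if not gaps:
        return "This is aligned. Ship it."
    lines = ["Here's what I'd reconsider..."]
    lines += [f"- {c.name}: {c.note}" for c in gaps]
    return "\n".join(lines)
```

The point of the sketch is the asymmetry: a single failed check is enough to turn a confirmation into pushback.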

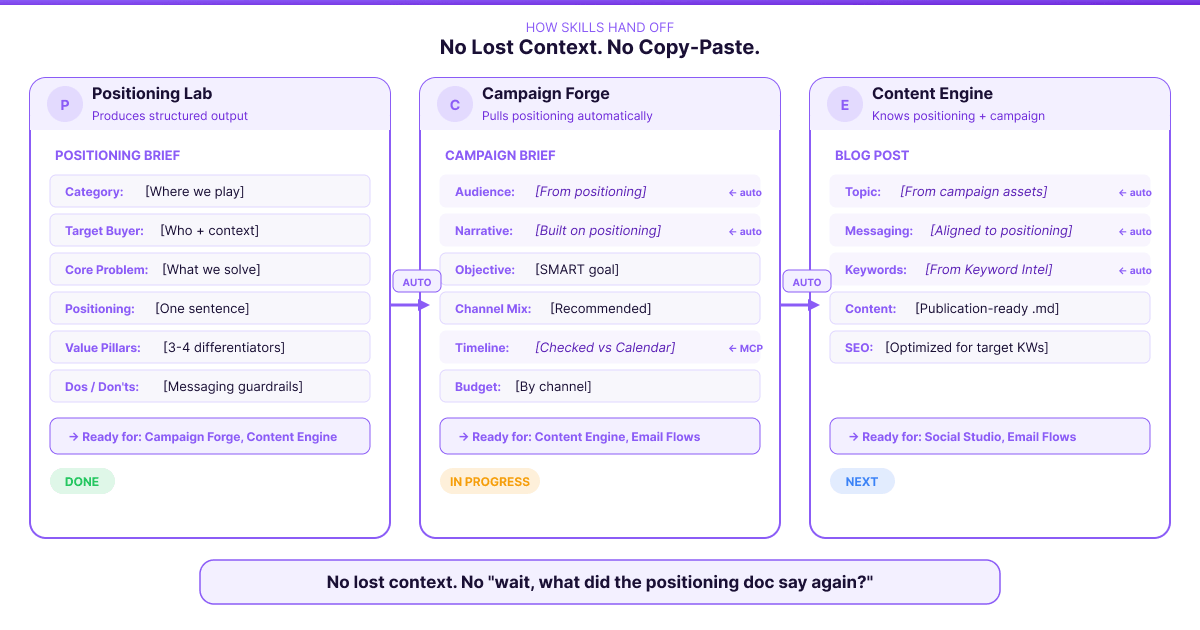

How Skills Hand Off

When Positioning Lab finishes, it produces structured output other skills can consume:

POSITIONING BRIEF HANDOFF

Category: [Where we play]

Target Buyer: [Who we serve + their context]

Core Problem: [What we solve]

Positioning Statement: [One sentence]

Value Pillars: [3-4 differentiators]

Messaging Dos: [What to emphasize]

Messaging Don'ts: [What to avoid]

>> Ready for: Campaign Forge, Content Engine, Sales Enabler

When Campaign Forge picks this up, it doesn't start from scratch:

CAMPAIGN BRIEF

[Pulls positioning automatically]

Objective: [SMART goal]

Audience: [From positioning]

Narrative: [Built on positioning statement]

Channel Mix: [Recommended + rationale]

Timeline: [Checked against Calendar]

>> Ready for: Content Engine, Email Flows, Paid Briefs

No lost context. No "wait, what did the positioning doc say again?"
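To make the handoff concrete, here is a rough Python sketch of the two templates above as typed records. The field names mirror the templates. The class names and `start_campaign` are hypothetical, and the timeline is left out because it depends on a live calendar check.

```python
from dataclasses import dataclass, field


@dataclass
class PositioningBrief:
    category: str
    target_buyer: str
    core_problem: str
    positioning_statement: str
    value_pillars: list[str]
    messaging_dos: list[str] = field(default_factory=list)
    messaging_donts: list[str] = field(default_factory=list)
    # Skills allowed to consume this brief, per the template above.
    ready_for: tuple[str, ...] = ("Campaign Forge", "Content Engine", "Sales Enabler")


@dataclass
class CampaignBrief:
    objective: str
    audience: str        # pulled from positioning
    narrative: str       # built on the positioning statement
    channel_mix: list[str]
    ready_for: tuple[str, ...] = ("Content Engine", "Email Flows", "Paid Briefs")


def start_campaign(
    brief: PositioningBrief, objective: str, channels: list[str]
) -> CampaignBrief:
    """Seed a campaign brief from positioning instead of starting from scratch."""
    if "Campaign Forge" not in brief.ready_for:
        raise ValueError("Positioning brief is not marked ready for Campaign Forge")
    return CampaignBrief(
        objective=objective,
        audience=brief.target_buyer,
        narrative=brief.positioning_statement,
        channel_mix=channels,
    )
```

Because the audience and narrative are copied from the positioning brief rather than retyped, there is one source of truth for both.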

My Take

This isn't about AI doing your marketing. It's about having someone in your corner who makes you better.

Before this system, I was making decisions alone. Writing strategy docs that no one reviewed. Building campaigns that no one pressure-tested. Second-guessing myself constantly because there was no one to say "yes, this aligns with how SaaS companies typically structure their ABM plays" or "no, your funnel is missing a stage."

Now I have a sparring partner.

When I propose a positioning angle, the system doesn't just say "great idea!" It asks: "How does this compare to how competitors are positioning? Is this a category creation play or a point solution play?"

When I build a campaign plan, it checks: "Does this timeline account for content dependencies? Is your budget allocation aligned with typical B2B benchmarks?"

When I'm right, it tells me. When I'm wrong, it tells me that too.

It's like having a full marketing team, except:

- They actually push back on your thinking

- They validate strategy against real frameworks

- They remember what you decided last week

- They connect research to positioning to campaigns to content

- You can iterate at the speed of conversation

Small teams with smart systems can outperform anyone. But not because AI does the work for you. Because it makes your thinking sharper.

What I'm Still Building

This isn't finished. Not even close.

The Creative Gap

Right now, the 18 skills cover research, strategy, content, distribution, and ops. But there's a hole in the middle: creative.

I don't have a skill that can look at a landing page and tell me if the visual hierarchy is off. I don't have one that analyzes why a competitor's carousel outperformed ours. I don't have a creative director who understands brand consistency across touchpoints.

That's next.

I'm building creative skills grounded in real analyzed case studies. Not generic "make it pop" advice — actual breakdowns of what works and why. Teardowns of high-performing ads, landing pages, emails. Pattern recognition from campaigns that actually converted.

The goal: a creative sparring partner who can say "this headline buries the value prop" or "this visual flow fights the reading pattern" or "based on similar campaigns, this CTA placement typically underperforms."

Other Things on the Roadmap

- Skill-to-skill automation: The VP recommends sequences, but I still manually invoke each skill. True orchestration means the VP calls skills automatically, checkpointing with me only at key decisions.

- More connectors: HubSpot for CRM data. Slack for notifications. Make for automation triggers. The MCP ecosystem keeps expanding.

- Feedback loops: When a campaign performs well or poorly, that should feed back into how the system thinks about the next one. Learn from what actually worked.

- Memory across sessions: Better ways to persist context so I'm not re-explaining my ICP every conversation.
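For the skill-to-skill automation item, one possible shape is a dependency graph with checkpoints. This is a sketch of something not built yet, so everything here is an assumption: the dependency map, the checkpoint set, and the `invoke` and `confirm` callbacks are all hypothetical. The ordering uses `graphlib.TopologicalSorter` from the Python standard library.

```python
from graphlib import TopologicalSorter
from typing import Callable

# Hypothetical map: skill -> skills whose output it needs first.
DEPENDS_ON: dict[str, set[str]] = {
    "Positioning Lab": {"Scoop Machine"},
    "Campaign Forge": {"Positioning Lab"},
    "Content Engine": {"Campaign Forge"},
    "Email Flows": {"Campaign Forge"},
}

# Hypothetical key decisions where the human is asked before continuing.
CHECKPOINTS: set[str] = {"Positioning Lab", "Campaign Forge"}


def run_plan(
    invoke: Callable[[str], None],
    confirm: Callable[[str], bool],
) -> list[str]:
    """Run skills in dependency order, pausing at checkpoints.

    Returns the skills that ran. Stops early if `confirm` returns False.
    """
    done: list[str] = []
    for skill in TopologicalSorter(DEPENDS_ON).static_order():
        invoke(skill)
        done.append(skill)
        if skill in CHECKPOINTS and not confirm(skill):
            break
    return done
```

In this sketch `invoke` would wrap whatever actually calls a skill, and `confirm` is where the VP checks in with you. Everything between checkpoints runs without interruption.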

Build Your Own

If you want to build something like this:

Step 1: Map your workflows

Before writing any prompts, document how you actually do marketing. What depends on what? Where do you lose context?

Step 2: Identify your specialists

Group related tasks into roles. Who does research? Strategy? Content? Ops? Each becomes a skill.

Step 3: Design the handoffs

For each skill: What inputs does it need? What outputs does it produce? What format lets the next skill use them?

Step 4: Build the orchestrator

The VP needs to know all specialists, the dependencies, and when to invoke each. More importantly: what questions to ask, what frameworks to validate against.

Step 5: Connect real tools

Look at Claude's MCP connectors. Which ones match the tools you use? Which skills benefit from live data? Start with 2-3.

Step 6: Teach it to push back

The hardest part. Your orchestrator shouldn't just execute. It should challenge. Build in the questions you wish someone would ask you.

Anyone else building something like this?

Not just prompts. Systems. Interconnected skills that share context, connected to real tools, with actual orchestration logic.

More importantly: anyone building AI that pushes back? That doesn't just execute, but challenges your thinking?

I'd love to see how others are approaching this. What's working? What's missing? What sparring partners have you built?

Send me a message on LinkedIn: linkedin.com/in/peacenikrahul92